Performance Impact of Checkpoint-Restart on Applications

Machine Specifications

| Node Count | CPU Model | CPU Core Info | Memory | IB Card | IB Switch | OS | OFED | Notes |
|---|---|---|---|---|---|---|---|---|
| 128 | Intel E5630 | 2x4 @ 2.53 GHz | 12 GB | Mellanox QDR | Mellanox QDR IB Switch | RHEL 6.5 | MOFED 2.2 | A Lustre parallel file system with 4 Object Storage Servers running over native InfiniBand is deployed to write application checkpoints generated by the checkpointing library BLCR v0.8.5. |

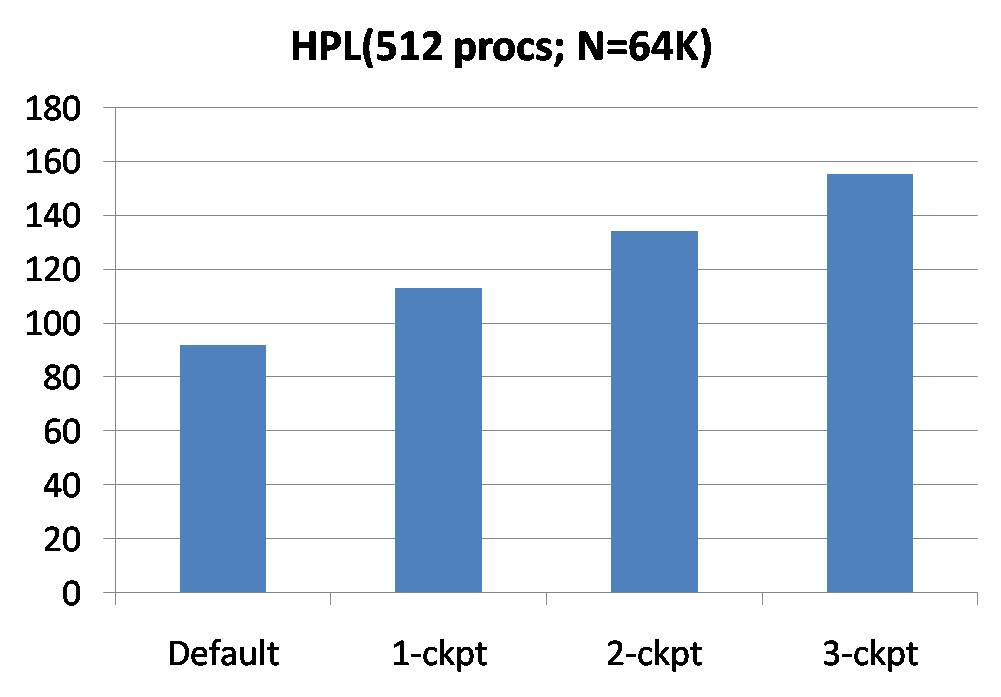

The left side of the bar graph below shows the total runtime of the High-Performance Linpack (HPL) application, run with 512 MPI ranks, for a varying number of checkpoint snapshots. For the input size used (N = 64000), the aggregate size of a single checkpoint is ~40 GB.

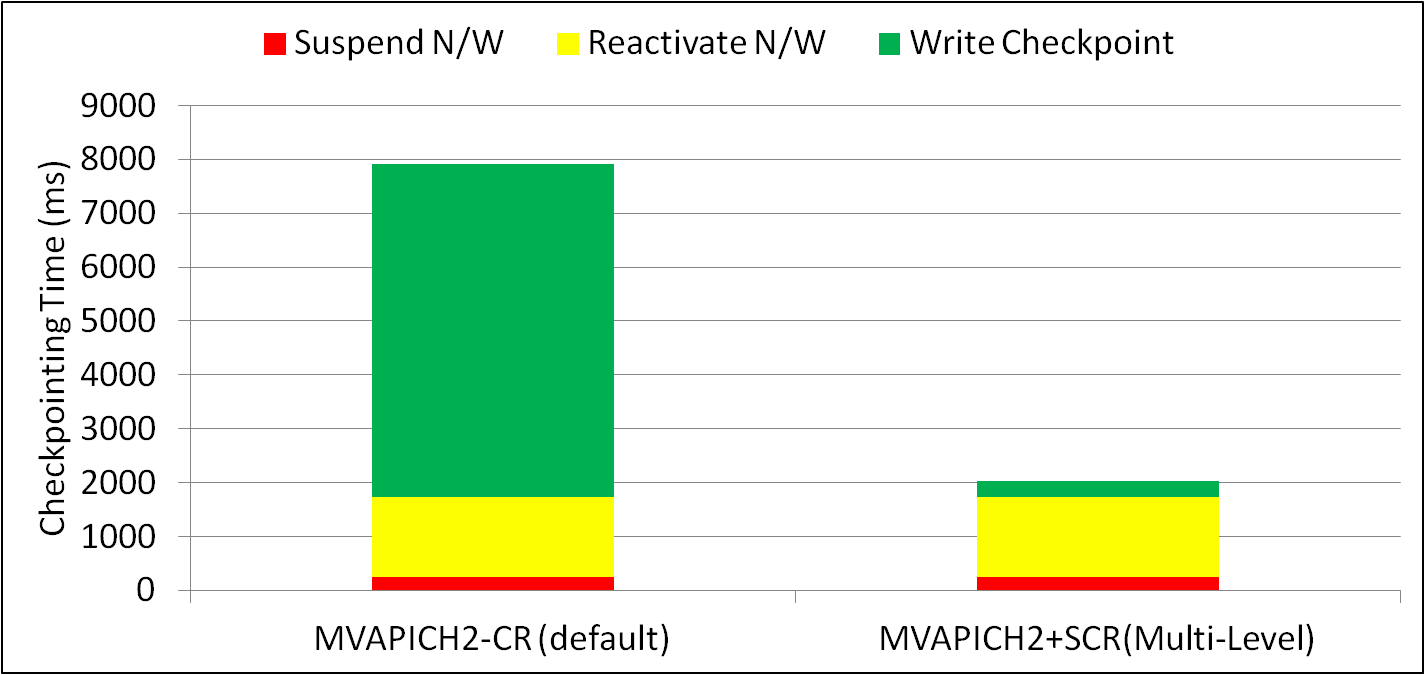
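As a rough sanity check on the HPL checkpoint size quoted above, the sketch below estimates the memory footprint of the HPL matrix and the average checkpoint share per rank. It assumes HPL's usual dense N x N matrix of 8-byte double-precision values and takes 1 GB as 10^9 bytes; the function names are illustrative and are not part of HPL, BLCR, or MVAPICH2.

```python
# Back-of-the-envelope sizing for the HPL run (N = 64000, 512 MPI ranks).
# Assumptions: dense N x N matrix of 8-byte doubles, 1 GB = 10**9 bytes.

GB = 10**9
MB = 10**6


def hpl_matrix_bytes(n: int, bytes_per_element: int = 8) -> int:
    """Bytes needed to hold a dense n x n matrix."""
    return n * n * bytes_per_element


def per_rank_bytes(aggregate_bytes: float, ranks: int) -> float:
    """Average checkpoint share per MPI rank, assuming an even split."""
    return aggregate_bytes / ranks


if __name__ == "__main__":
    matrix = hpl_matrix_bytes(64000)
    print(f"HPL matrix: {matrix / GB:.3f} GB")            # 32.768 GB
    share = per_rank_bytes(40 * GB, 512)
    print(f"Per-rank checkpoint: {share / MB:.3f} MB")    # 78.125 MB
```

Under these assumptions the matrix alone accounts for roughly 33 GB of the ~40 GB checkpoint. The remainder is plausibly the rest of each process image, although the source does not break the figure down.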

The graph below illustrates the performance impact of the Checkpoint-Restart mechanism provided with MVAPICH2, using the ENZO cosmology simulation as a representative application. The Radiation Transport sample workload provided with the application was executed using 512 MPI ranks. For the input parameters used, the aggregate size of a single checkpoint is ~13 GB. The graph shows both the default Checkpoint-Restart mechanism in MVAPICH2 and the SCR-assisted multi-level checkpointing mechanism.

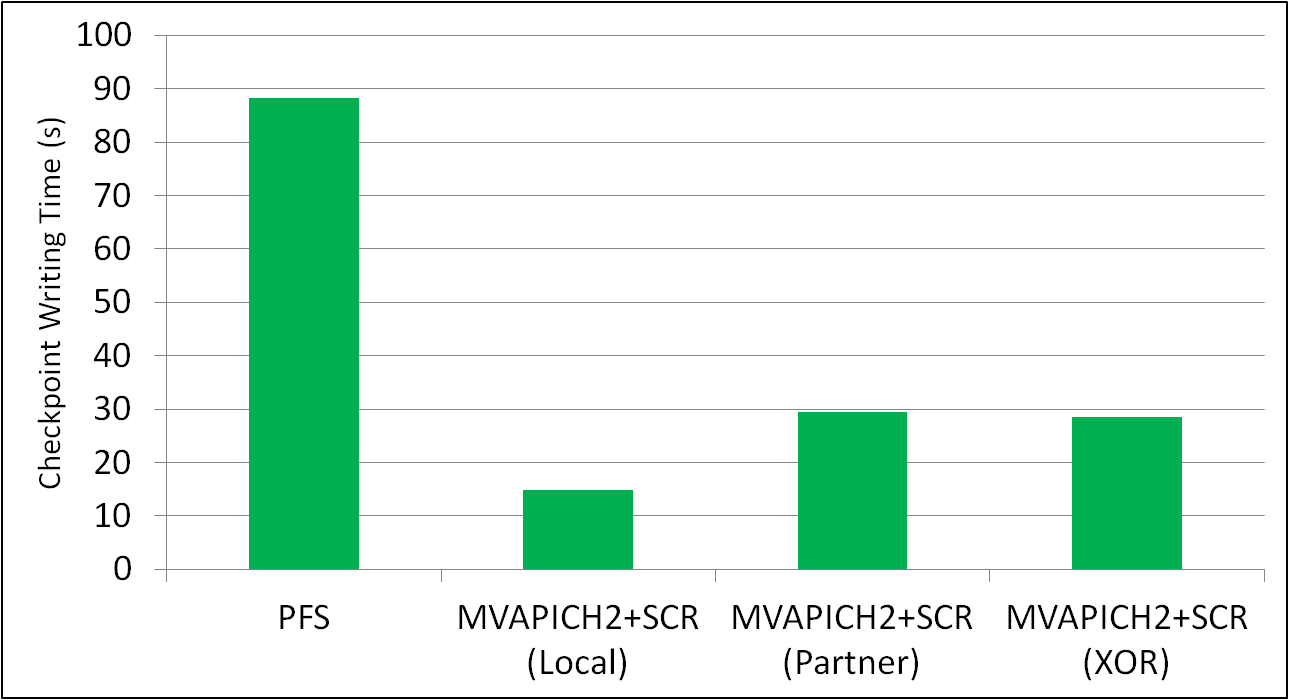

The SCR library implements three redundancy schemes that trade off performance, storage space, and reliability. The graph below compares the checkpoint-writing time of these schemes against the default model of writing to a parallel file system. The aggregate checkpoint size for each of these runs, which used 512 MPI ranks, was ~50 GB.
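To make the trade-off concrete, the sketch below illustrates the idea behind parity-based redundancy, one of the schemes the SCR documentation describes (alongside keeping a single local copy and keeping a full copy on a partner node). It is a minimal, in-memory illustration of XOR parity under simplifying assumptions, not SCR's implementation or API; the function names and the sample data are hypothetical.

```python
from functools import reduce
from typing import List


def xor_bytes(a: bytes, b: bytes) -> bytes:
    """Byte-wise XOR of two equal-length buffers."""
    return bytes(x ^ y for x, y in zip(a, b))


def make_parity(blocks: List[bytes]) -> bytes:
    """XOR all checkpoint blocks together, zero-padding to the longest."""
    size = max(len(b) for b in blocks)
    padded = [b.ljust(size, b"\0") for b in blocks]
    return reduce(xor_bytes, padded)


def recover(blocks: List[bytes], parity: bytes,
            lost_index: int, lost_len: int) -> bytes:
    """Rebuild one lost block from the parity and the surviving blocks."""
    size = len(parity)
    survivors = [b.ljust(size, b"\0")
                 for i, b in enumerate(blocks) if i != lost_index]
    # XOR-ing the parity with every survivor leaves only the lost block.
    return reduce(xor_bytes, survivors, parity)[:lost_len]


if __name__ == "__main__":
    # Hypothetical per-rank checkpoint contents.
    checkpoints = [b"rank0-data", b"rank1", b"rank2-checkpoint"]
    parity = make_parity(checkpoints)
    restored = recover(checkpoints, parity, lost_index=1, lost_len=5)
    print(restored == checkpoints[1])
```

A parity scheme of this kind stores far less extra data than a full partner copy, but it costs extra computation and can only rebuild one lost block per parity set, which is the kind of performance, space, and reliability trade-off the comparison above measures.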